Because there is still room to squeeze more performance out of the SATA interface, most data centers have been slow to adopt NVMe technology. It's easier to add capacity, raise IOPS, or lower latency when you're starting from something as slow as SATA. If you look at today’s data centers, most architects are focused on improving CPU utilization. Even with a rack full of expensive CPUs (whatever the core count or licensing cost), data centers rarely manage to use even thirty percent of those CPUs' maximum capacity.

Imagine paying for a server room full of Ferraris, only to end up stuck driving them at 20 mph. This isn’t a Ford vs. Ferrari moment, but rather an unleaded vs. high-octane moment.

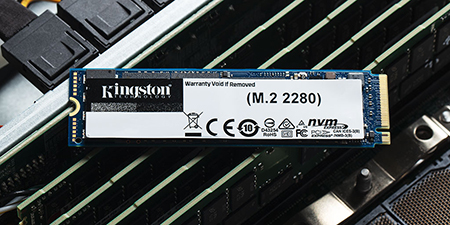

NVMe is driving change through both faster transfer speeds and in-memory provisioning, allowing you to roughly double CPU utilization from thirty to almost sixty percent. On existing infrastructure, NVMe can help the CPUs run more efficiently, with lower latency and higher throughput. However, you must be able to accommodate NVMe. Limitations can include your existing backplanes, or a form factor that does not allow you to simply plug in a replacement drive. In those cases, adoption becomes a larger overhaul.
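To put the utilization figures in perspective, here is a minimal back-of-the-envelope sketch in Python. The server price and core count are purely hypothetical placeholders, not figures from any vendor; only the thirty and sixty percent utilization levels come from the discussion above. The helper name `cost_per_useful_core` is likewise just an illustration.

```python
def cost_per_useful_core(server_cost: float, cores: int, utilization: float) -> float:
    """Return the hardware cost attributed to each core's worth of work actually done."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return server_cost / (cores * utilization)


# Hypothetical inputs, chosen only to make the arithmetic concrete.
SERVER_COST = 20_000.0  # assumed price of one server
CORES = 64              # assumed core count per server

before = cost_per_useful_core(SERVER_COST, CORES, 0.30)  # utilization cited for today's data centers
after = cost_per_useful_core(SERVER_COST, CORES, 0.60)   # utilization cited as reachable with NVMe

print(f"Cost per useful core at 30% utilization: {before:,.2f}")
print(f"Cost per useful core at 60% utilization: {after:,.2f}")
print(f"Ratio after/before: {after / before:.2f}")
```

Whatever numbers you plug in for cost and core count, doubling utilization halves the cost of each core's worth of useful work, which is the real point of the Ferrari analogy.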

Moving from a SAS-based system means changing the architecture of the server, unless you use an adapter to get NVMe SSDs onto the PCIe bus. For the customer, that is a full platform change. Compared to the hardware-based host controllers used by SATA and SAS, the PCIe interface is software-defined and delivers higher efficiency to dedicated processes. It is astounding how well NVMe combines low latency with the CPU's ability to multithread.

Now, the next question you might ask is, “What’s more important today: upgrading the entire car, or just supercharging the engine?”

For most data center managers, the change is going to be gradual, starting with incremental upgrades like the Kingston DC1500M and DC1000B.